Pressing Buttons

I want Ty to type on any keyboard using a camera to identify and detect the keys. But I’m a little stuck on the machine learning and I want visible progress on this project by the end of the year. Maybe I should spend some time on the robotics instead.

I read in JavaScript Robotics about making a keyboard typing robot with popsicle sticks, a couple of motors, and an Arduino. They assume a fixed location keyboard and only a little bit of code. It would be hilarious if Ty could type “Happy New Year!” And they made it look easy. They lied.

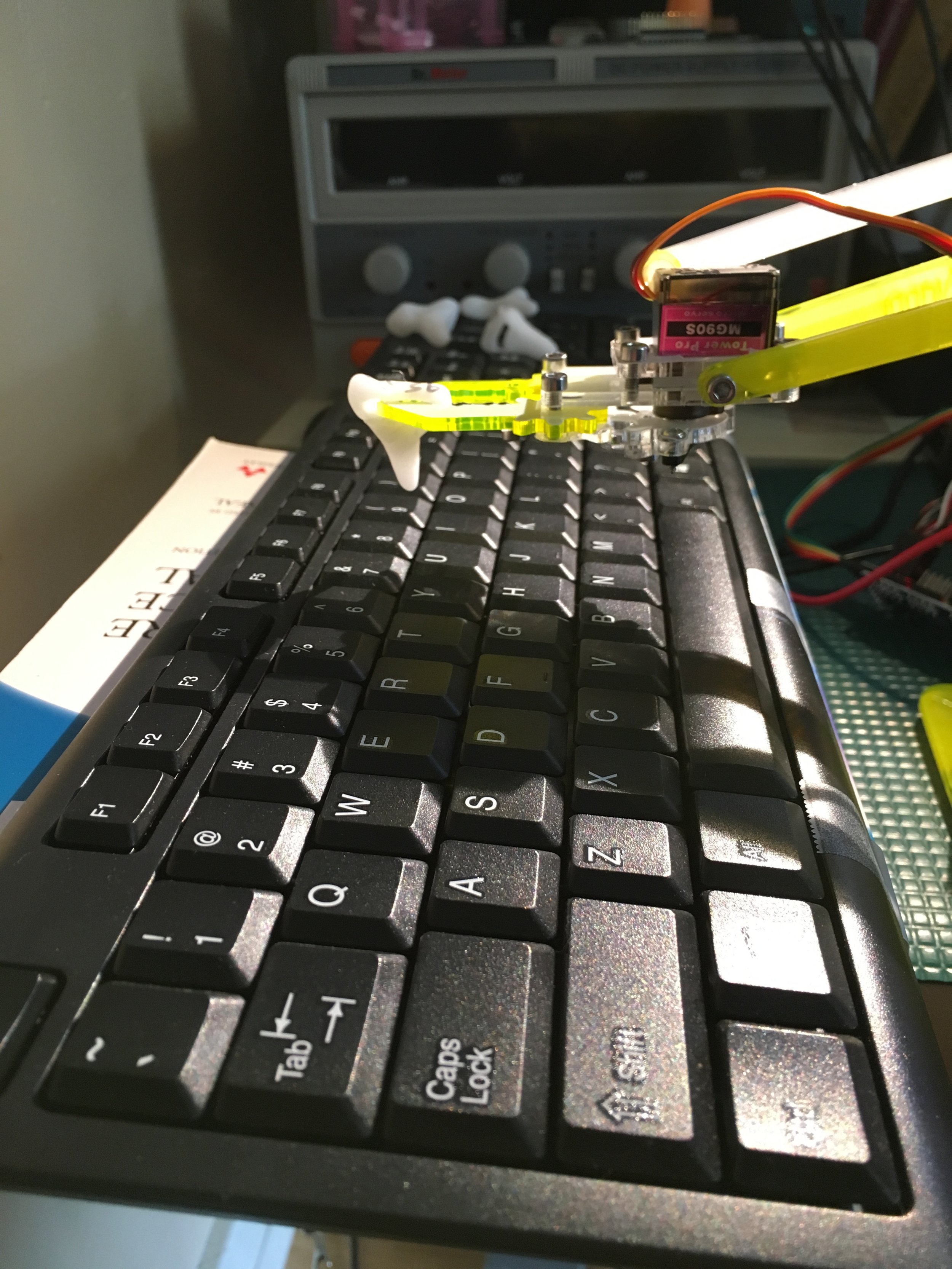

A quick reminder: the MeArm motors are connected to a servo controller, and current monitoring resistors connected to an ADC (see past posts). The servo controller and ADC are I2C connected to the TX2, the completely overpowered controller board that will one day do machine learning things. I do not have encoders on the joints (cue foreboding music).

Initially, I leaned a keyboard against a wall, moved the claw in line with a key, and then moved the claw toward the wall to press the key. Poor little guy didn’t have the oomph to press keys. (Apparently fingers are pretty strong, who knew?)

Wising up that gravity was going to be my friend, I laid the keyboard flat on the desk (like you would normally use a keyboard). The claw isn’t oriented to go down, so I needed some sort of finger to press the key. I tried a number of different finger-like objects: they were too heavy, too pointy, not pointy enough, too slippy, too squishy, and so on. I realized I needed something that the claw gripper could grip, and I thought a wine cork would fit, but I didn’t have any handy at the time so I used a wine stopper. This pressed a button. It made me giggle so I did it a bunch more, and even called Chris in to watch it.

I started laying out the map of my keyboard: m is at [x, y, z] and backspace is at a different [x, y, z]. I eventually realized this wasn’t going to work because the arm wasn’t long enough to get to q, given where the base was. I moved the keyboard closer and then the arm didn’t have enough oomph to press space at that close angle. Feeling like Goldilocks, I moved the keyboard until it seemed like the claw could get to everything. Then I laid out the map again, painstakingly figuring out where m and backspace really were (also b, n, e, and o, because that’s how far I got in my random walk of the keyboard before I realized q and space were a problem at this position).

It was working but this was with me saying go to m’s [x, y, z] then go to q and then backspace. When I asked it to press the keys in a different order, they didn’t work: the initial position mattered to repeatability. I added a neutral location that the arm goes to between keys. Now, every time the arm presses a button, it comes from the same direction.
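Here is a minimal sketch of that idea in Python. The coordinates are made-up placeholders and the names (`KEY_MAP`, `NEUTRAL`, `plan_presses`) are hypothetical, not taken from the repo. The point is only that every key is approached from the same starting spot.

```python
# Hypothetical key map: every coordinate is an invented placeholder, not a measured value.
NEUTRAL = (0.0, 100.0, 60.0)
KEY_MAP = {
    "m": (20.0, 140.0, 10.0),
    "q": (-90.0, 170.0, 10.0),
    "backspace": (110.0, 180.0, 10.0),
}


def plan_presses(keys, key_map=KEY_MAP, neutral=NEUTRAL):
    """Return the waypoints for pressing `keys`, visiting neutral before and after each key."""
    waypoints = [neutral]
    for key in keys:
        waypoints.append(key_map[key])  # go to the key's [x, y, z]
        waypoints.append(neutral)       # always come back, so the next approach is identical
    return waypoints
```

With this, `plan_presses(["m", "q"])` and `plan_presses(["q", "m"])` both reach each key directly from neutral, so the order of the keys no longer changes the approach direction.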

That worked for a while and I put together a map of most of the keyboard. I got the arm to type out “hello” and got a video of it. It felt like things were starting to work.

However, sometimes, when I’d go back to press other keys, sections of the keyboard didn’t stay mapped properly. I must have hit a key too hard and at the wrong angle because my wine-stopper finger had tilted a little in the claw. While it was solid in the Y and Z directions, it could tilt back and forth around the X direction. I needed a better finger.

And so, for science and engineering, I opened a bottle of champagne. I carved the cork to have a resting place for the claw to avoid the tilting. As a bonus, the cork-based finger was lighter. I remapped the keys because I didn’t trust the map I had.

I know that mapping out the keys this way is wrong. I should use a camera. But at this point, I wanted to do this. I know it is possible. Even if this isn’t my goal path, I want to make this work. I can’t let it best me.

As I remapped and checked the new mappings, I noticed the arm was jumping sometimes, when it went fast to q or the = key. It would unbalance and hop a little. Figuring that this might be a part of my calibration problem, I taped the arm’s base to my work surface at a few key points.

This was working well enough that I started adding features to my pressKey function. First I made a pressKey function to go from neutral to the key’s XY position, then drop to the key’s Z location for the press, then back to neutral. Unfortunately, how can the robot tell when it has completed each move so it can start the next one?

Initially, I added current monitoring so I could stop the system if the current was reading too high or adjust the position if it was sitting in a high-current state (sometimes if the arm moves up then down, the servos stop struggling). These were intended to be slow detections. Of course, now I decided to try to use them to detect when the system was done moving (not a great idea) and when the button was being pressed (not as bad an idea). Encoders would be easier, but the arm had been working so well that they didn’t seem necessary. Of course, it was working poorly after I sped up the system and started depending on the current response. But it was still mostly working.

At some point, my new non-tilting finger tilted and the new map skewed and went bad. I could have fixed it but I wanted a system I could trust. This was a great excuse to try out this hot-water moldable plastic that has been sitting on my desk for months. The plastic is fun and worked as advertised. Though, my crafting skills are, um…, poor. I made little fingers and most of them still tilted in one direction or another. I finally hit on the idea of making a shape that can’t turn (which turned out to be something that goes around the claw instead of something that is gripped by the claw). They ended up looking like little shark teeth with elliptical holes.

After redoing the mapping yet again, I still wasn’t happy with my pressKey function. It seemed like it needed more directions to make each keypress consistent, more than just go to the pressed key location. Assuming a neutral position, I decided I wanted it to have a more thoughtful, more planned motion:

1. Move to the key’s XY position, waiting for the current to indicate motion is finished (or a timeout proportional to the distance traveled)
2. Move to the key’s Z position, waiting for a short time or until the current indicates an obstacle (aka “the key is pressed”)
3. Raise to the nearly original Z position, waiting for the current to indicate motion is finished (or a short timeout)
4. Move to neutral X, Y, Z+(a bit), waiting for the current to indicate done (or a timeout proportional to the distance traveled)
5. Fall to neutral XYZ to keep the servos happy, waiting for the current to indicate done (or a short timeout)
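The steps above can be sketched roughly like this in Python. This is one reading of the plan, not the code in the repo: the `arm` object with `move_to` and `read_current`, the current thresholds, the `LIFT` clearance, and the name `press_key` are all assumptions made for illustration.

```python
import math
import time

IDLE_AMPS = 0.15   # assumed: below this, treat the servos as done moving
PRESS_AMPS = 0.60  # assumed: above this, treat the finger as pushing on a key
LIFT = 15.0        # assumed: the "a bit" of extra height, in the map's units


def wait_for(condition, timeout_s, poll_s=0.005):
    """Poll condition() until it is true or timeout_s elapses; return True if it became true."""
    deadline = time.monotonic() + timeout_s
    while True:
        if condition():
            return True
        if time.monotonic() >= deadline:
            return False
        time.sleep(poll_s)


def press_key(arm, key, neutral, secs_per_unit=0.005, short_s=0.05):
    """Run the five-step press. `arm` is any object with move_to(x, y, z) and read_current()."""
    kx, ky, kz = key
    nx, ny, nz = neutral
    # Timeout for the long moves grows with the XY distance traveled.
    travel_s = short_s + secs_per_unit * math.dist((nx, ny), (kx, ky))

    def idle():
        return arm.read_current() < IDLE_AMPS

    def blocked():
        return arm.read_current() > PRESS_AMPS

    arm.move_to(kx, ky, nz)            # 1. over the key, still at neutral height
    wait_for(idle, travel_s)
    arm.move_to(kx, ky, kz)            # 2. down onto the key
    pressed = wait_for(blocked, short_s)
    arm.move_to(kx, ky, nz + LIFT)     # 3. back up to about the original height
    wait_for(idle, short_s)
    arm.move_to(nx, ny, nz + LIFT)     # 4. above neutral
    wait_for(idle, travel_s)
    arm.move_to(nx, ny, nz)            # 5. settle down to neutral
    wait_for(idle, short_s)
    return pressed                     # True only if the current spike was seen in step 2
```

Every wait has a timeout, so a noisy or stuck current reading slows the press down rather than hanging it. That is also five places for a threshold to be slightly wrong.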

I have noticed many times that complexity builds up one tiny step at a time until you look at it and say, “That is not at all what I intended.” As I started to try to refine the system, it kept getting more fiddly and more prone to strange corner case errors. Worse, the motion has gotten janky and jerky, and lost its fluidity.

Is it disturbing that when I told my robot arm to type “hello”, it typed “help”?

In the process of trying to create a motion planner, I switched from straight-line motion which took small steps with lots of waits to telling the servos to jump to where I wanted. This is a reflection of how my arm-controlling code works: my function gotoPoint looks at the distance between the points and takes small steps to get there, waiting a fixed time between each step; the other function goDirectlyTo determines the joint angles needed to achieve a point and then sets the servos to that directly, with no intermediate steps. (Note that gotoPoint calls goDirectlyTo many times.)
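To make the difference concrete, here is a small Python sketch of the stepping idea. The names `interpolate` and `goto_point`, the step size, and the pause length are stand-ins for illustration; the direct-move function is passed in as a parameter so the sketch does not pretend to know how the real goDirectlyTo computes joint angles.

```python
import math
import time


def interpolate(start, end, step_size):
    """Return evenly spaced points from start (exclusive) to end (inclusive),
    each no more than step_size apart along a straight line."""
    distance = math.dist(start, end)
    steps = max(1, math.ceil(distance / step_size))
    return [
        tuple(s + (e - s) * i / steps for s, e in zip(start, end))
        for i in range(1, steps + 1)
    ]


def goto_point(go_directly_to, start, end, step_size=5.0, pause_s=0.02):
    """Straight-line move: many small direct jumps with a fixed wait after each one."""
    for point in interpolate(start, end, step_size):
        go_directly_to(point)  # assumed to solve the joint angles and set the servos
        time.sleep(pause_s)    # fixed wait; there are no encoders to say when the arm arrived
```

For a move from `(0, 0, 0)` to `(10, 0, 0)` with a step size of 5, the direct function is called twice, once at the midpoint and once at the target, instead of once for the whole distance.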

But my key-press motion planner only called goDirectlyTo. That works well for short distances but not as well for long ones. Even if I made the perfect map, had the perfect finger for my claw, and always returned to a neutral position, my key pressing function had a lot of error. When I switched back to gotoPoint, the whole system slowed down but became a lot more stable, even when I removed the current monitoring for checking that motion stopped.

After some time remapping again, I noticed my keyboard had shifted. That was easy to fix. I taped it to a large book (ARM Manual, 2nd Ed; pun completely intended) and shifted it back until the map worked again. Then I noticed the arm’s tape was pulled up and completely ineffective. Impatient with the constant errors, I got a C-clamp and, trust me, the arm’s base is not moving again until I let it.

I started mapping again, wondering how to make the system repeatable and stable in this configuration, pondering how I can use the camera to avoid the mapping errors, and considering how to make a true-to-life map file and adapt the system to use that with learned scale and offsets for position and rotation.
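One way that last idea could look, as a hedged Python sketch: keep the keys in an idealized layout (say, in key widths) and map them into arm coordinates with a single scale, rotation, and offset. Here the transform is fitted from two reference keys rather than learned from many samples, which is a simplification of my own; the function names are hypothetical, and Z is ignored.

```python
def fit_similarity(layout_a, layout_b, arm_a, arm_b):
    """Fit scale + rotation + offset from two keys known in both coordinate systems.

    Points are (x, y) pairs. Treating them as complex numbers, the mapping is
    arm = gain * layout + offset, where abs(gain) is the scale and the phase
    of gain is the rotation.
    """
    la, lb = complex(*layout_a), complex(*layout_b)
    aa, ab = complex(*arm_a), complex(*arm_b)
    if la == lb:
        raise ValueError("the two reference keys must be at different layout positions")
    gain = (ab - aa) / (lb - la)
    offset = aa - gain * la
    return gain, offset


def layout_to_arm(point, gain, offset):
    """Map an (x, y) layout position into arm coordinates."""
    mapped = gain * complex(*point) + offset
    return (mapped.real, mapped.imag)
```

With something like this, a shifted or rotated keyboard would need two keys re-measured instead of the whole map. It would not fix an arm that reaches differently when cold.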

For much of this process, I have been heading in what I am certain is the right direction and getting worse results the further I go. Finally, I was starting to see more consistency! I was going to win!

After I fix each problem, I see that it was so obviously wrong and I wonder how I could have been so stupid/ignorant/thoughtless as to have believed it could work that way. I tell people that resilience is a skill that must be practiced but the voice in my head crankily comments on the facts: of course the correct finger tool was critical and, of course, the keyboard and arm must not move with respect to each other. Clearly, only an idiot would fail to commit working code, instead checking in only when everything was broken. I know, I know, I’m learning, which is the real goal; a typing robot is pretty silly, after all.

I finished about half the keyboard map before the end of the day. It could type “hello” and “z] qm=.” I carefully shut off the power, making sure not to bump anything, confident that I could finish in the morning.

Powering on this morning, gremlins came and changed things, making the keyboard wider somehow. If I get the letter h working well, the arm won’t reach to e. Oh, I know it is more likely that the motors and plastic need to be warm to get to the same map that I had. But that means I can’t do the map, and this path isn’t going to work.

Part of me is glad; this unintelligent typer wasn’t what I really wanted. The other part needs more coffee before optimism has a chance of returning.

And then it dawned on me that maybe Ty didn’t type “help” because he needed help. Maybe he typed it for me.

If you want to see the code for this, it is in a GitHub repo.